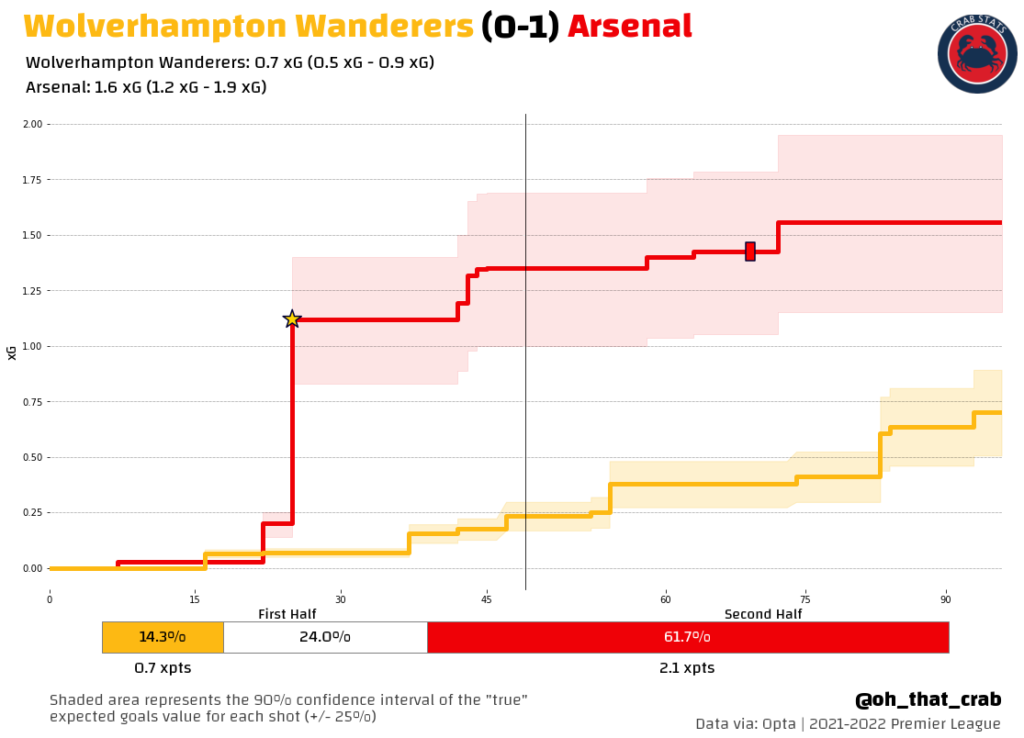

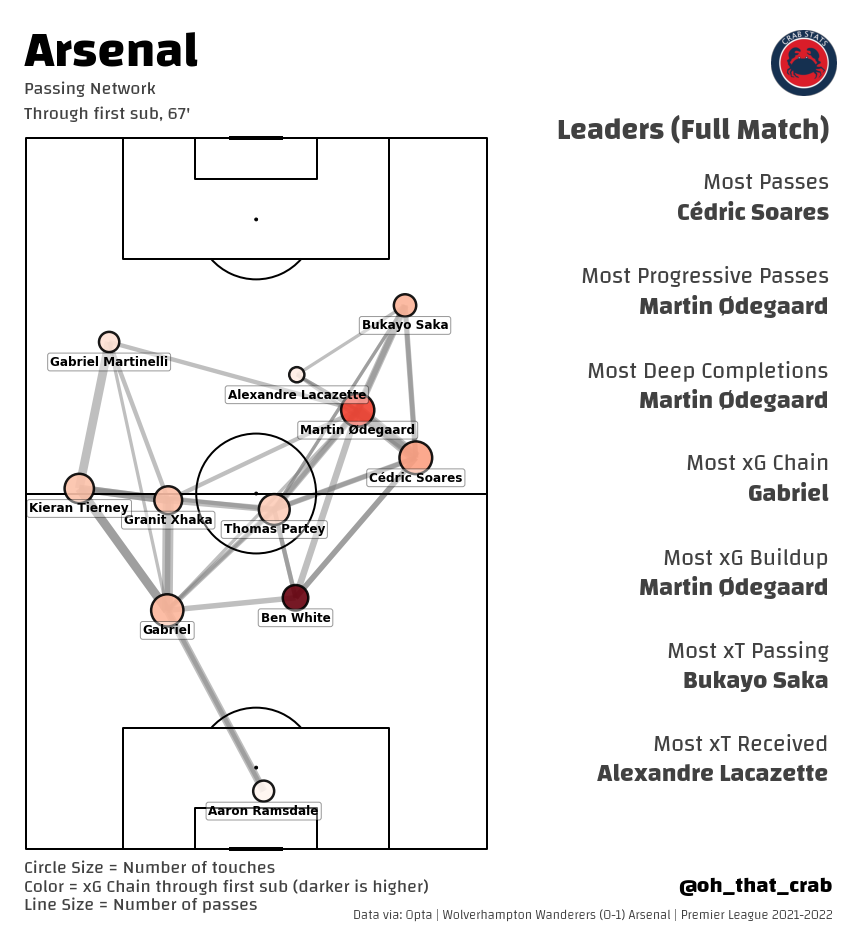

When I was doing my pre-match preparations I came away worried that this was going to be a defensive slog. Wolves were rated as the 5th best defense with Arsenal rated as the 4th best. In the end that is how things played out, with Arsenal creating just enough to score.

It was an important win for Arsenal and capped a very good match week for Arsenal’s top four chances.

Wolves 0-1 Arsenal: By the graphics

Wolves 0-1 Arsenal: By the numbers

7 – Shots from Open Play for Arsenal. Arsenal average 10.8 per match this season.

0.5 – Expected Goals (xG) from Open Play for Arsenal. Arsenal average 1.1 open play xG per match this season.

10 – Shots from Open Play for Wolves. Wolves average 7.6 per match this season.

0.6 – Expected Goals (xG) from Open Play for Wolves. Wolves average 0.8 open play xG per match this season.

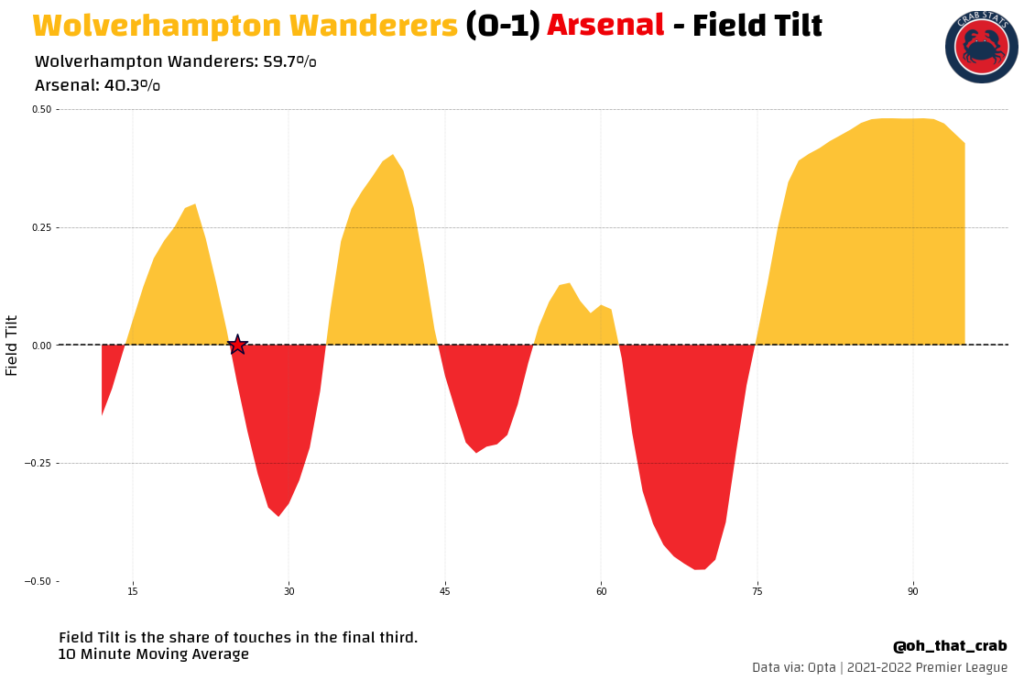

As I said in the preamble, this ended up being a pretty defensive slog: two good defenses, with an okay attack (Arsenal) up against a not very good attack (Wolves).

41 – Clearances made by Arsenal, the most Arsenal have made in a match this season. The previous high for Arsenal this season was 33 against Watford.

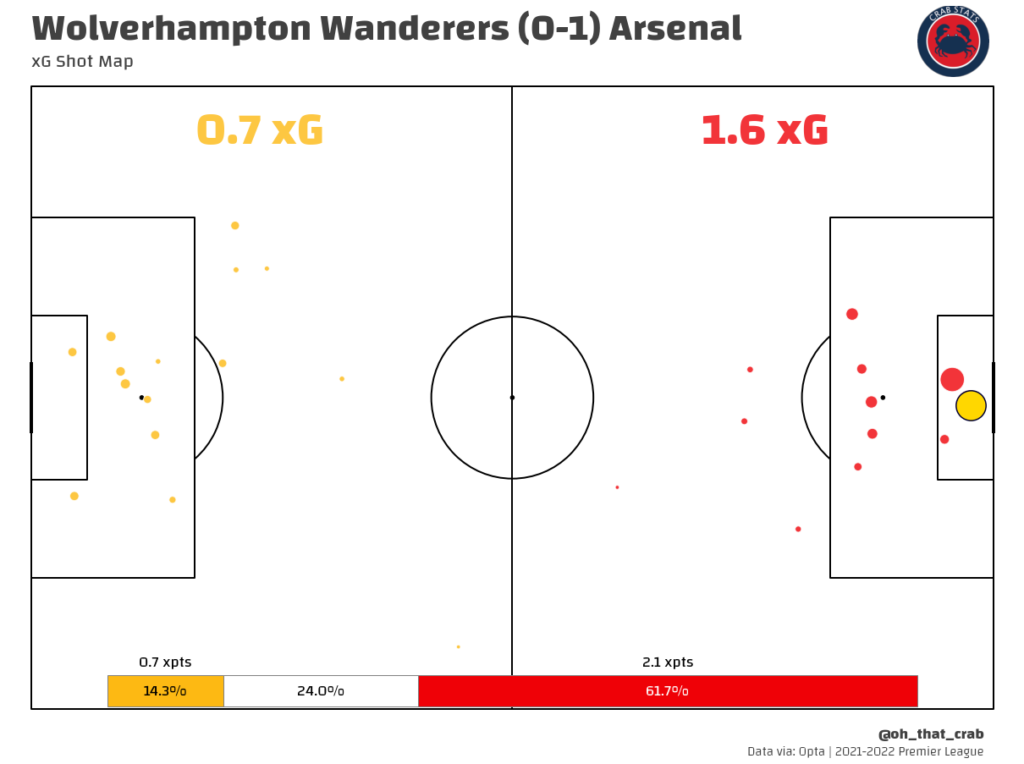

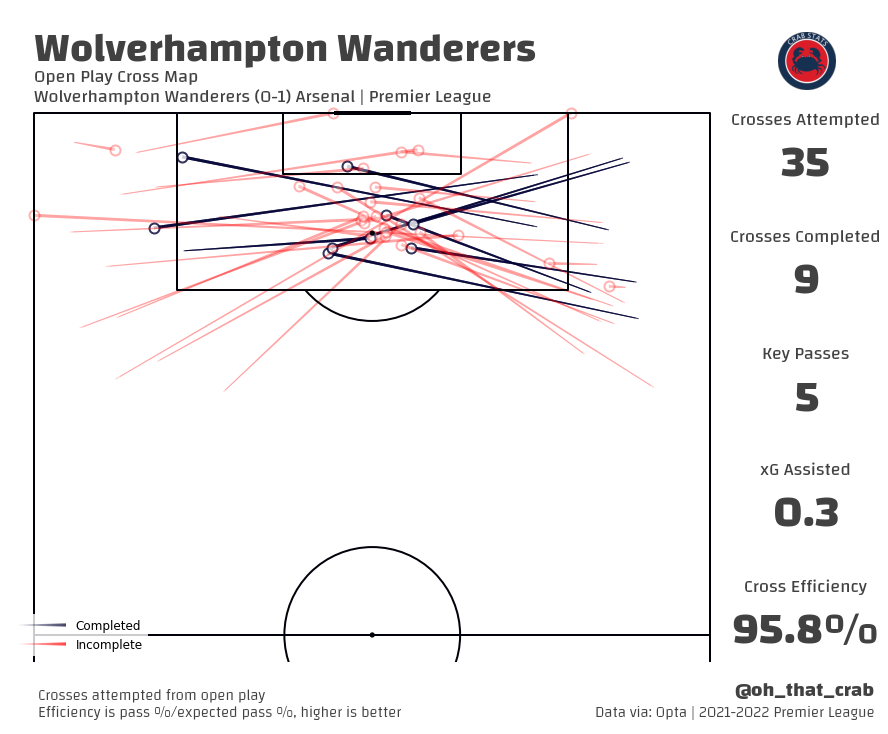

42 – Crosses attempted by Wolves in this match, 35 of them from open play.

8 – Passes completed in the box by Wolves.

2 – Passes completed in the box by Wolves that were not crosses.

One of the things Arsenal did well in this match, especially after the red card, was limiting access to the penalty box. In the end, Arsenal allowed 32 touches in the box, which is a bit worse than their average (24 per match), but given that they were playing with 10 men it was a commendable performance.

More important for me was that they forced Wolves into the dreaded crossing strategy. Mikel Arteta anticipated this threat well by bringing on Rob Holding in the 71st minute. Here is how Holding did in those 20+ minutes:

9 – Clearances, led all players

6 – Headed Clearances, led all players

1 – Interception

1 – Blocked Shot

3 – Aerial Duels won

100% – Aerial Duel success rate

0 – Passes attempted

The perfect defensive performance. He came on and was exactly the right player to stick in the center of defense.

2 – Goals in 2022 for Arsenal.

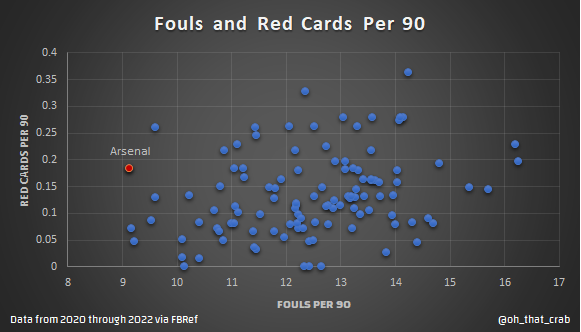

4 – Red Cards in 2022 for Arsenal.

141 – Minutes Arsenal have played in 2022 with 10 men. Arsenal have played just 568 minutes total in 2022.

25% – Percentage of minutes played in 2022 down a man.
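For anyone who wants to check the 25% figure, it is simply the 141 minutes played a man down divided by the 568 total minutes in 2022. A minimal Python sketch using only the numbers above:

```python
# Minutes figures for Arsenal in 2022, taken from the stats above.
minutes_down_a_man = 141
total_minutes_2022 = 568

# Share of all 2022 minutes played with 10 men.
share = minutes_down_a_man / total_minutes_2022

print(f"{share:.1%} of minutes played down a man")  # 24.8%, which rounds to 25%
```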

103 – Minutes total that Arsenal have played with a man disadvantage in the Premier League this season, the most of any team.

23 – Minutes total that Arsenal have played with a man advantage in the Premier League this season, the 5th fewest (there are three teams that have 0) of any team.

Arsenal have been one of the more heavily punished teams in terms of red cards. I don't think there is a conspiracy going on here, because if you look at the vast majority of Arsenal's red cards in isolation, they are justifiable. What does seem to happen (and perhaps this is only because I pay the closest attention to Arsenal) is that Arsenal rarely seem to get the benefit of the doubt when there is room for subjectivity.

What happened in this match feels like a great example of this. I think Gabriel Martinelli committed two fouls that were worthy of yellow cards. He tried to slow down a quick throw-in, then got frustrated that he had failed and took down the player in a way that was much more obvious than it needed to be. I think Michael Oliver generally refereed the match well, but he also got caught up in the moment in this situation.

What makes this one especially odd is that you almost never see two yellows in the same sequence of play; it has happened before, but it is very rare. It is these types of decisions that seem to happen to Arsenal more often (it is possible it only feels this way because I focus mostly on Arsenal and that's my frame): things that are within the laws of the game but are not generally enforced. I don't know what to make of it. The red card was earned by Martinelli, but it still feels unfair because you never see that happen.

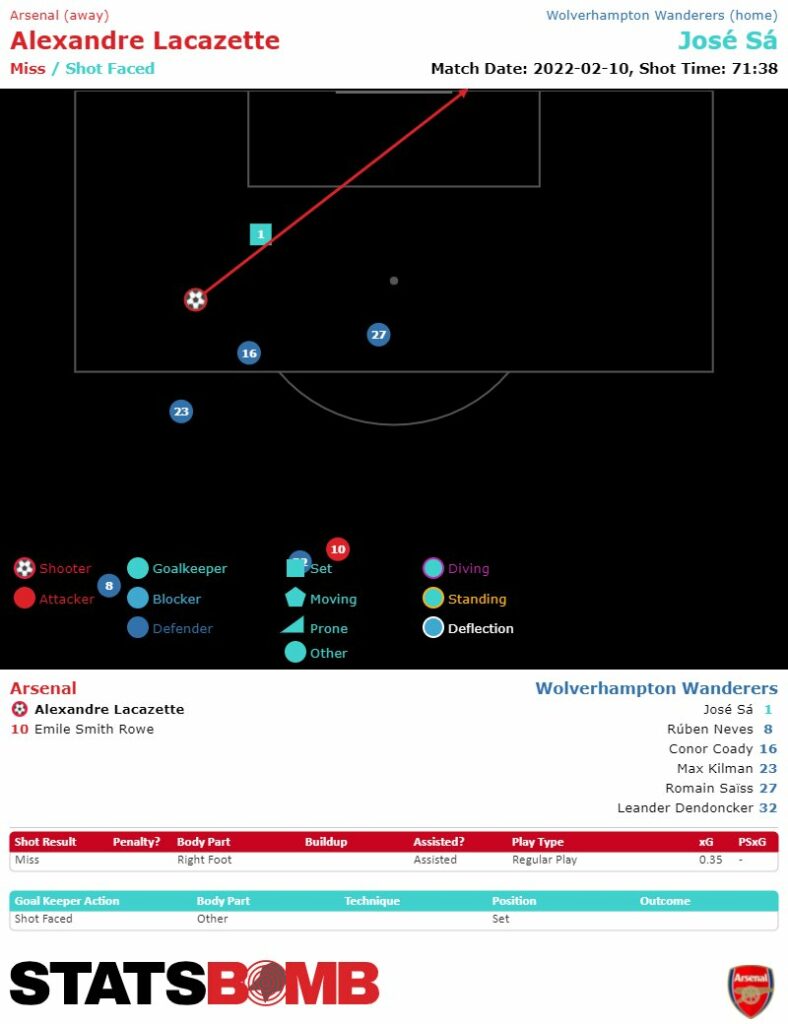

The Lacazette Chance

12% – The probability of a goal being scored by my expected goals model

7% – The probability of a goal being scored by the Understat expected goals model

26% – The probability of a goal being scored by the Wyscout expected goals model

35% – The probability of a goal being scored by StatsBomb’s expected goals model

I don’t love looking at individual chances and going purely by what expected goals models say. The reason is that measuring the “true” probability of a single chance is really hard, and factors that aren’t measured can have a big effect on any one chance. It is important to think of the value reported for a chance's quality as an average estimate with a good amount of uncertainty, where the real chance could be better or worse.
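One way to see that uncertainty is to put the four model values for the Lacazette chance side by side. The Python sketch below uses only the numbers listed above and reports the range plus a simple unweighted average. The averaging is an illustrative choice of mine for this sketch, not how any of these providers combines estimates, and it assumes every model deserves equal weight, which is not necessarily true.

```python
from statistics import mean

# xG for Lacazette's chance as reported by four different models (from the list above).
model_xg = {
    "my model": 0.12,
    "Understat": 0.07,
    "Wyscout": 0.26,
    "StatsBomb": 0.35,
}

values = list(model_xg.values())
low, high = min(values), max(values)

# A crude, unweighted summary: every model counts equally here.
avg = mean(values)

print(f"Range across models: {low:.0%} to {high:.0%}")
print(f"Simple average: {avg:.0%}")
for name, xg in sorted(model_xg.items(), key=lambda item: item[1]):
    print(f"  {name:<10} {xg:.0%}")
```

The highest estimate is five times the lowest (35% vs 7%), which is a good reminder to read any single number for a single chance with caution.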

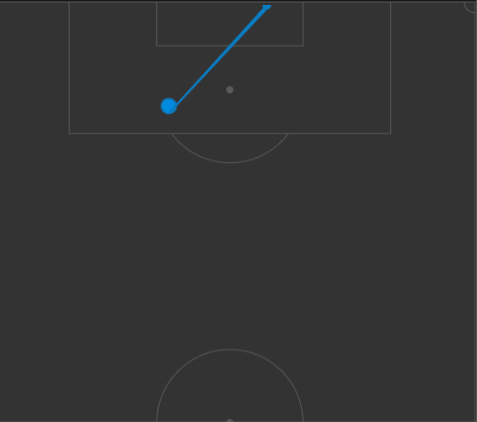

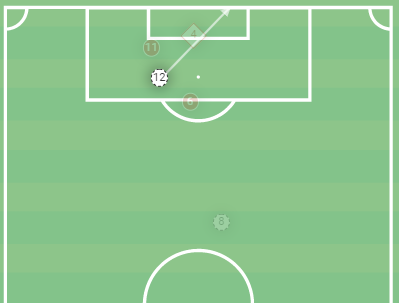

The other thing that adds uncertainty is how accurately we measure where things happen. The three images below show the same shot, each recorded at a slightly different location on the pitch.

One of the biggest factors driving expected goals is where a shot takes place. With differences in location this large, the models will produce different values based purely on where the person coding the match thinks the shot was taken.

What I think we should take away from this is, first, that Lacazette’s chance wasn’t easy: realistically it was probably in the 1 in 4 to 1 in 3 range for being scored. Second, those of us who work with stats should do a better job of communicating the uncertainty in our ratings (this is why I have added the shaded areas to my running xG charts).
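To show why the coded location matters so much, here is a toy Python sketch of one geometric input that many xG models use in some form: the angle the goal mouth subtends from the shot location. It assumes pitch coordinates in metres with the goal line at x = 0, the centre of the goal at y = 0, and a 7.32 m wide goal. The `goal_mouth_angle` helper and the three example locations are hypothetical; they are not the coded positions of Lacazette's shot and not the actual features of any of the models above.

```python
import math

GOAL_WIDTH_M = 7.32  # regulation goal width in metres
HALF_WIDTH = GOAL_WIDTH_M / 2


def goal_mouth_angle(distance_from_goal_line: float, lateral_offset: float) -> float:
    """Hypothetical helper: angle in degrees between the two posts, seen from the shot.

    distance_from_goal_line: metres out from the goal line (must be > 0).
    lateral_offset: metres left/right of the centre of the goal.
    """
    if distance_from_goal_line <= 0:
        raise ValueError("shot must be taken in front of the goal line")
    # Bearings to the post at y = +HALF_WIDTH and the post at y = -HALF_WIDTH.
    angle_to_pos_post = math.atan2(HALF_WIDTH - lateral_offset, distance_from_goal_line)
    angle_to_neg_post = math.atan2(-HALF_WIDTH - lateral_offset, distance_from_goal_line)
    return math.degrees(angle_to_pos_post - angle_to_neg_post)


# Three hypothetical codings of "the same" shot, a few metres apart.
locations = [(11.0, 0.0), (11.0, 4.0), (14.0, 6.0)]
angles = [goal_mouth_angle(x, y) for x, y in locations]

for (x, y), angle in zip(locations, angles):
    print(f"{x:>4.1f} m out, {y:>4.1f} m wide -> {angle:.1f} degrees of goal to aim at")
```

Moving a shot a few metres wider and further out shrinks the target noticeably, which is the kind of swing that feeds through into different xG values when data providers code the same shot in different places.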

@Oh_that_crab

Sources: Opta via Whoscored, StatsZone, Understat, my own database. Statsbomb via FBref. Wyscout.

Laca and the referee could do better

Lacazette’s chance was in the 1 in 4 category? I really don’t understand how this stat is measured. If you put any team’s primary striker in that position (with that much time), surely it’s reasonable to expect them to score more often than not?

Feels that way but that doesn’t match the reality of those kinds of chances. Sa did a good job cutting the angle and staying up. Lacazette probably should have brought the ball infield more to get a better look at goal.

Supporters’ rationality about probabilities pretty much goes out of the window when you’re looking at the ONE chance we created all game.

If someone comes to you and says “You only have one shot, make it count”, you then believe you should “give your max” and beat the odds.

I agree that all fans will apply a heavy subjective dose of irrationality to just about everything involving their own team. But – and at risk of labouring the point – there is no way that Laca should be missing that chance; and there is no way that any striker should think missing it 3 out of 4 times is an acceptable hit rate.

Yes you have to factor the skill of the striker, but also the goalkeeper too. Jose Sa came off his line and positioned himself near perfectly.

Thierry missed a few similar chances in his time, he was one of the best ever at that curler into the far-side netting but it’s a difficult technique – Laca should’ve probably tried to roll it under the keeper instead.

I hear what you are saying but you’re just wrong.

But do the stats take into account what player it is in that position? Maybe if it’s all players since the dawn of time (or at least since the dawn of stats) in that position it becomes a 1 in 4 chance. But Laca is our central striker, had all the time in the world and only the goalkeeper to beat. There is no way that any team should be happy with their ostensible primary goal scorer missing that chance 75% of the time.

Last comment from me on this because I’m conscious that it will get tedious, but the point about Lacazette cutting inside is a good one and to me makes this stat void. The ball wasn’t dropping out of the air and Lacazette wasn’t forced to hit it from where he did. He was clean through – with so much time and not a defender in sight. That is what makes it such a big chance, and one that a team’s striker should be scoring more often than missing.

Exactly. The stats don’t take into account the chance as a sequence, only the frame when Laca decided to shoot. However, a striker with more skill can make it a goal.

The point is that Martinelli had just been sent off. Laca used his (Martinelli’s) vacated space before the defenders had readjusted to playing against 10 men. Excellent. Laca had done 98% of the job, his brain told him the job was done, he relaxed and let his concentration drop…

I think that highlights an inevitable limitation in statistical analysis of a one-off chance. In this instance Lacazette has so much time that we can see he has a lot of autonomy as to where the shot is taken from, and he should have taken it in further as you say, or possibly gone around the outside of the keeper. It’s a 25-33% chance from where he took it, but a faster forward with quicker feet turns it into a 50%+ chance, hence Tom’s comment and I’m sure many others’ who didn’t comment! This is what elite strikers do better than…

Statsbomb’s shot location seems to be the most accurate of the 3. No idea why the other two are so far off.

The ref should have had the throw-in retaken, because it was a foul throw: Martinelli made physical contact with the player as the throw-in was taken. VAR should have reviewed that, because it led to a sending off, and they ought to have seen that according to the rules opposing players should be at least 2 meters away from the person taking the throw-in. But I am not a ref; they should have known better.

I don’t think it was a foul throw; watching it back, it looks like he got both feet on the ground with the ball coming straight over his head. It is one of those by-the-letter-of-the-law ones. He certainly fouled and interfered with the throw, but Oliver played advantage. Martinelli barged Semedo in the back and that is something worthy of a yellow. In the context of the match, the second one seemed kind of borderline, with a couple of worse fouls not given yellows for both teams and a few less bad ones given yellows. The frustrating part…

I think it is worth acknowledging, too, that the Wolves player cut back in front of Martinelli at the last second looking for the contact. I think it is also worth questioning the second yellow in light of the law regarding playing the advantage; some people on Twitter have posted the law and it seems to imply that Martinelli should not have been cautioned with the second yellow. The bottom line, which I think everyone outside of the referee apologists agrees with, is that Arsenal pay a tax with these league officials that no other club is subject to…

You cannot commit a foul when the ball is not in play. You can, however, commit a cautionable offense (or sending-off offense) when the ball is not in play. So, the only way a referee can punish an offense that occurs before a throw-in is by showing a card (he cannot award a free kick, which is always awarded after a foul). I think this is what Oliver should have done, immediately. A good referee normally wants to avoid rewarding the offending team by preventing the non-offending team from playing quickly, if they want. (The most common situation, other than…

I should say, other than for time-wasting or dissent.

If we prepare for every match knowing we will get a red card it will not be as bad. We should just set up tactics and training sessions on having 10 men, and if we finish with 11 it can be a sign of progress.

Before the game my money was on Gabriel getting a red card and my friend picked Xhaka. Next game I am picking Pepe, or Ben White.

After the Gabriel timewasting yellow it felt nailed on that there would be a second yellow somewhere. We ended up with the most unusual one.

The fact that Cedric also got away without a second yellow card shows you what a crazy and inconsistent game the ref had. At one point he made a really bad tackle and I was almost certain he was getting a 2nd yellow. Then I realized he hadn’t had one yet, and for his crazy foul he just got a talking-to from the ref, and then the Martinelli situation. Just total madness!

That’s true, I swore Cedric was about to be sent off

Gabriel was a good bet though 🙂

Pepe? Who is this guy? Never heard of him

Teams do practise attack v defence with outnumbered sides in training.

We practise it repeatedly against real Premiership opposition!

Re the sending off: is being in possession an advantage from a throw-in halfway into your own half? The whole sequence of events leading to the sending off was subjective and could have gone either way, one yellow or two, but with Oliver’s history with Arsenal it was always going to be two.

This was the 5th or 6th time Oliver has refereed Arsenal this season, which I’m sure can’t be right.

The advantage is where the throw ends up. Semedo is in space running towards goal, and yeah, I think that is an advantage vs taking the throw again.

I am trying to understand the graphic headed Fouls and Red Cards per 90. There are an awful lot of blue dots there, so am I right in thinking this represents more than the Premiership?

What I can see is that Arsenal’s red dot seems to indicate the lowest average of fouls per 90 minutes of all the dots! But some blue dots have more red cards?

What would be nice is a chart with each row showing:

Club | Red Cards | Yellow Cards | Fouls | Handballs

Is this available anywhere?

Yeah, maybe I will add a bit more. That chart is for the “Big 5 Leagues”; each team is a point.

Could you do one with each PL team on it over the last few seasons, with each team appearing several times, i.e. once per season? It would be great to see if Arsenal consistently get a hard time and other teams an easy time. Thanks.

If so Arteta could use it as evidence of bias in his meeting with officials.

Yeah I couldn’t get my head round the fact there were so many teams getting more reds than us!

Xhaka has played 7 Premier League games since he returned from injury and has been booked in 5 of them. That’s quite an alarming percentage.

If he gets booked 5 times in the next 10 games (up to and including fixture 32) then that’s an automatic 2 match ban. And that’s probably around the time we have our rearranged fixtures against Spuds, Chelsea, Liverpool.

We’re a much much better team with Xhaka in the starting 11 but Granit if you’re reading, stop getting booked mate. At least until matchday 32.

3 massive points yesterday.

It puts our top 4 destiny in our own hands.

All we need to do is ensure that none of West Ham, Man U and Spurs gets more points than we do between now and May, and we are home and dry.

It’s not that easy considering our two strikers, but our fluid forward line should compensate for that.

COYG!

On the fouls vs red cards diagram, could we see who the other teams are please? It would be interesting to see if Man U, say, get an easy ride from the referees.

Reading this is like doing the language of Algebra.😃

A lot of folk thought Laca shudda finished this one off.

Thanks for a bit of reality therapy “oh that crab” mate.

It looked to me like a big chance but not a tap in.

Your thoughts on Gabi’s red are honest and fair… what is galling for Arse fans is how much violence against us goes unpunished.

There is a gap between definitions of “chance”. When stats talk about a chance, they consider only what happened. When fans talk about a chance, they mean you are alone with only the keeper to beat.

He should have scored.

We should have a striker that can score those

People keep putting our red cards down to a ‘lack of discipline’ when more often they’re more to do with a lack of agility or foot speed on Xhaka’s part. However, Martinelli’s actually was to do with discipline. Seconds before the throw-in the Wolves sub came through the back of him but the ref gave nothing; Martinelli immediately tried to block the throw and, in a rush of blood to the head, scampered after the same Wolves guy to get him back. Without the anger he might have made a more measured challenge. This could be a great lesson for him,…

Appreciate you explaining the Lacazette chance like that. It does my head in when blogs go on about how he “should score” all the time, but only when it’s Lacazette and always without actually evaluating how good the chance was. On the red cards, you say “if you look at the vast majority of the red cards that Arsenal have gotten in isolation they are justifiable”. Most of them were, but I’d argue there are just as many ‘justifiable’ red card decisions against the opposition that aren’t given. Just because it’s justified doesn’t mean it’s fair. If he…

Blogs ‘go on about how he should score’ because – guess what – he should score.

This is a massive wake up call to the players – and the new captain in particular – that our chance creation and finishing needs to improve drastically if we want top four.

Pretty much what you’d expect from the Laca chance. The reaction to it speaks more to the lack of what Arsenal produced over the game than to his profligacy. It’s an odd one to be relitigating, because we’ve spent virtually two years now complaining that we can’t judge our strikers for missing when they only get one or two chances a game. By my count Laca had two chances, put one (the higher xG chance) down the keeper’s throat and the other a foot wide, and got an assist. Not a stellar performance but hardly problematic.

Well, it was Only Wolves.

Aha. Sp*rs 🤣🤣🤣🤣🤣

The same Wolves are 2:0 up at White Hart Lane. I love watching the spuds sink without trace! Conte is doing a wonderful job there and to think some were shouting out for him to be Arsenal manager 🤣